|

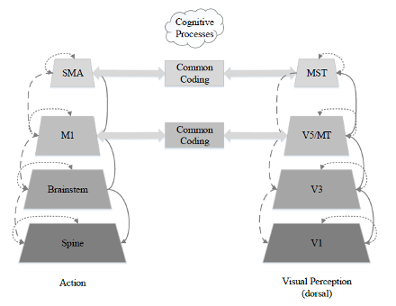

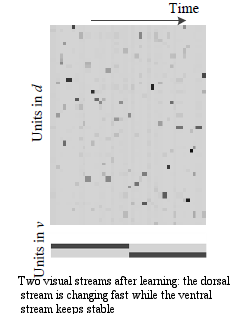

The brain comprises hierarchical modules on various physiological levels. Neural feedback signals (including lateral and top-down connections) modulate neural activity via inhibitory or excitatory connections within and between these levels. They perform predictive and filtering functions on the neuronal population coding of bottom-up, sensory-driven signals in the perception-action system. In this thesis, we propose that the predictive role of the feedback pathways at most levels of action and perception can be modelled by recurrent connections in different artificial cognitive platforms (simulation and humanoid robots). This is examined with three recurrent neural network models. The three models and the experiments with them show that recurrent neural networks are able to model feedback pathways and to exhibit feedback-related sensorimotor predictive functions. This work was sponsored by the EU Marie Curie RobotDoc project.

PART I: In the first model, inspired by neurobiological studies, we emphasize that the feedback connections facilitate a predictive mechanism that compensates for the neural delay in the two streams (ventral and dorsal) of the visual system. We model this with a novel recurrent network with a horizontal product. In the simulation, the recurrent connections give rise to fast- and slow-changing neural activations in the dorsal-like and ventral-like hidden layers, respectively. In particular, the recurrent connections build one feedback channel that predicts the upcoming neural activity in the dorsal-like hidden layer, while another feedback channel maintains a stable neural encoding in the ventral-like hidden layer. (Fig. 1)

Publication: Zhong, Junpei, Cornelius Weber, and Stefan Wermter. "Learning features and predictive transformation encoding based on a horizontal product model." Artificial Neural Networks and Machine Learning–ICANN 2012. Springer Berlin Heidelberg, 2012. 539-546.
[Code] [Paper]

PART II: In the second part of the thesis, a sensorimotor integration model with visual prediction is implemented. Its visual perception component corresponds to the dorsal-stream representation of the first model. This further extends visual prediction with the role of guiding motor action. Together with an action module that adopts a continuous reinforcement learning algorithm, this model enables a smoother and faster docking behaviour for a humanoid robot.

Publication: Zhong, Junpei, Cornelius Weber, and Stefan Wermter. "A predictive network architecture for a robust and smooth robot docking behavior." Paladyn, Journal of Behavioral Robotics 3.4 (2012): 172-180.

[Link] [Code (Continuous Actor Critic)]

PART III:

In the third experiment, we propose that the source of the feedback pathway could be high-level cognitive processes, such as pre-symbolic representations. Furthermore, the emergence of these cognitive processes and the feedback-related sensorimotor functions are not independent: they integrate with and assist each other in a hierarchical way. Therefore, we augment the first horizontal product model with additional units, called parametric bias (PB) units, as a pre-symbolic representation. In the robot experiments, we show that while the robot learns by observing sensorimotor primitives, the pre-symbolic representation self-organizes in the parametric bias units; during prediction, these representational units act as a prior expectation that guides the robot to recognize and to anticipate various pre-learned sensorimotor primitives.

Publication: Zhong, Junpei, Angelo Cangelosi, and Stefan Wermter. "Toward a self-organizing pre-symbolic neural model representing sensorimotor primitives." Frontiers in behavioral neuroscience 8 (2014).

[Link] [Code]

These three experiments demonstrate that implementing the feedback pathways with recurrent connections can realize predictive sensorimotor functions. The emergence of these feedback pathways also accounts for the pre-symbolic representation in cognitive systems. Furthermore, we claim that recurrent connections can be one of the possible neural structures for building the feedback pathways of sensorimotor integration in artificial cognitive systems.


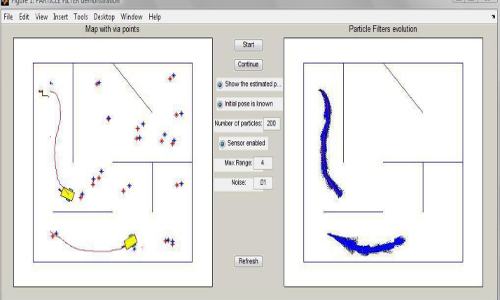

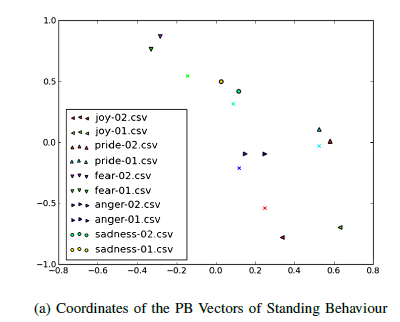

Multi-robot systems have a major advantage over single-robot systems: multiple robots working together can potentially accomplish a task faster than a single robot. However, when a team of robots shares the same worksite, the Simultaneous Localization and Mapping (SLAM) problem becomes much harder to solve because a large amount of information has to be processed and analyzed. On the other hand, multi-robot SLAM can be more efficient if the robots exchange and share their sensed data properly. In the SLAM problem, especially for Autonomous Underwater Vehicles (AUVs) and Unmanned Aerial Vehicles (UAVs), it is necessary to handle non-linear and non-Gaussian parameters, for which the traditional Kalman Filter (KF) cannot yield an ideal solution. In such applications, Particle Filters (PF), which are based on Monte Carlo simulation, are more suitable estimation techniques. However, in problems involving multiple dimensions, such as multi-robot SLAM, where a large number of particles is used, two problems, namely particle impoverishment and sample size dependency, occur during the particle updating stage and become more severe. These problems reduce the accuracy of the estimation results, and resampling algorithms such as Sequential Importance Sampling, Stratified Resampling and Systematic Resampling are used to alleviate them. In my master thesis, a novel PF algorithm for tackling the particle impoverishment and sample size dependency problems is studied and its application to a multi-robot system is examined. In this algorithm, Ant Colony Optimization (ACO) is incorporated into the generic particle filter in order to drive the proposal distribution towards the optimal solution.
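
As a point of reference for the generic particle filter mentioned above, the following is a minimal sketch of one predict, update and resample cycle with systematic resampling, for a one-dimensional state. It is not the thesis implementation and it does not include the ACO step. The random-walk motion model, the Gaussian observation model, the function names and the parameters `motion_std` and `obs_std` are all assumptions made for illustration.

```python
import math
import random


def effective_sample_size(weights):
    """Effective number of particles for normalized weights: 1 / sum(w^2)."""
    return 1.0 / sum(w * w for w in weights)


def systematic_resample(weights, rng):
    """Pick particle indices using one random offset and evenly spaced pointers."""
    n = len(weights)
    offset = rng.random() / n
    indices = []
    i = 0
    cumulative = weights[0]
    for k in range(n):
        pointer = offset + k / n
        # Advance to the particle whose cumulative weight covers the pointer.
        while pointer > cumulative and i < n - 1:
            i += 1
            cumulative += weights[i]
        indices.append(i)
    return indices


def particle_filter_step(particles, weights, observation, rng,
                         motion_std=0.5, obs_std=1.0):
    """One cycle of a generic 1-D particle filter (illustrative models only)."""
    n = len(particles)
    # Predict: assumed random-walk motion model.
    predicted = [p + rng.gauss(0.0, motion_std) for p in particles]
    # Update: assumed Gaussian observation likelihood around each particle.
    unnormalized = [
        w * math.exp(-0.5 * ((observation - p) / obs_std) ** 2)
        for w, p in zip(weights, predicted)
    ]
    total = sum(unnormalized)
    if total > 0.0:
        new_weights = [w / total for w in unnormalized]
    else:
        # All likelihoods underflowed: fall back to uniform weights.
        new_weights = [1.0 / n] * n
    # Resample only when the weights have degenerated (common heuristic).
    if effective_sample_size(new_weights) < n / 2:
        chosen = systematic_resample(new_weights, rng)
        predicted = [predicted[i] for i in chosen]
        new_weights = [1.0 / n] * n
    return predicted, new_weights


def estimate(particles, weights):
    """Weighted mean of the particle set."""
    return sum(p * w for p, w in zip(particles, weights))
```

Resampling duplicates high-weight particles and discards low-weight ones, which is where particle impoverishment comes from: after many resampling steps the set may contain only a few distinct values. The effective-sample-size threshold of `n / 2` is a common heuristic, not a value taken from the thesis.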
A mathematical proof and results obtained from a single-variable estimation problem as well as from the robot localization problem show that, after the ACO optimization, a better proposal distribution and more accurate estimation results can be obtained. To evaluate the performance of the ACO-improved PF (PFACO) on non-linear and non-Gaussian problems such as localization and SLAM, further studies were conducted and the use of the PFACO algorithm for multi-robot systems was introduced. In a multi-robot environment, when two robots encounter each other, the information on the same estimation problem represented by the two sets of particles is re-evaluated based on information conveyed by particles from the other set. The particles are then merged into a single set, and in such cases parallel computing can be applied to reduce the processing time. In software simulation, our results are better than those of traditional approaches in both estimation error and execution time.

In this work, a variant of the recurrent neural network is adopted to accomplish several tasks in the non-verbal expression of emotions. This work was sponsored by the EU ALIZ-E project.

Part I: We trained a Recurrent Neural Network with Parametric Bias units (RNNPB) on a selection of the data on expressive human movement, which had been collected with an inertial motion capture system in the first year and subsequently analyzed. We found that the additional PB variables of the RNNPB act as bifurcation parameters for the non-linear dynamics. The PB units therefore constitute a low-dimensional space that reduces the features and represents slow-changing profiles (such as emotion) of body movements. Furthermore, in agreement with our above-mentioned analysis of human movement and with the work presented in the previous subsection, this behavioral expression space should not be restricted to the basic emotions but should also be continuous.
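
To make the role of the PB units concrete, here is a small illustrative sketch, not the project code. A tiny recurrent network receives a constant PB vector as extra input, and "recognition" means searching for the PB values that best reproduce an observed sequence while all weights stay fixed. In an RNNPB the PB values are normally adapted with back-propagated prediction error; this sketch substitutes a finite-difference gradient with a simple backtracking step to stay short. The network size, the random weights and all function names are assumptions.

```python
import math
import random


def make_random_params(n_hidden, n_pb, rng):
    """Random weights for a toy one-input, one-output recurrent network."""
    w_in = [rng.uniform(-1, 1) for _ in range(n_hidden)]
    w_rec = [[rng.uniform(-1, 1) for _ in range(n_hidden)] for _ in range(n_hidden)]
    w_pb = [[rng.uniform(-1, 1) for _ in range(n_pb)] for _ in range(n_hidden)]
    w_out = [rng.uniform(-1, 1) for _ in range(n_hidden)]
    return w_in, w_rec, w_pb, w_out


def rollout(params, pb, x0, steps):
    """Closed-loop prediction; the PB vector is held constant over the sequence."""
    w_in, w_rec, w_pb, w_out = params
    n_hidden = len(w_rec)
    hidden = [0.0] * n_hidden
    x = x0
    outputs = []
    for _ in range(steps):
        hidden = [
            math.tanh(
                w_in[i] * x
                + sum(w_rec[i][j] * hidden[j] for j in range(n_hidden))
                + sum(w_pb[i][k] * pb[k] for k in range(len(pb)))
            )
            for i in range(n_hidden)
        ]
        x = sum(w_out[i] * hidden[i] for i in range(n_hidden))
        outputs.append(x)
    return outputs


def sequence_error(params, pb, x0, target):
    """Mean squared error between the rollout and the observed sequence."""
    predicted = rollout(params, pb, x0, len(target))
    return sum((p - t) ** 2 for p, t in zip(predicted, target)) / len(target)


def recognize_pb(params, x0, target, n_pb, lr=0.5, iters=200, eps=1e-4):
    """Adapt only the PB values (weights fixed) to explain the observed sequence."""
    pb = [0.0] * n_pb
    error = sequence_error(params, pb, x0, target)
    for _ in range(iters):
        grad = []
        for k in range(n_pb):
            up = pb[:]
            up[k] += eps
            down = pb[:]
            down[k] -= eps
            # Central finite difference stands in for back-propagated error.
            grad.append((sequence_error(params, up, x0, target)
                         - sequence_error(params, down, x0, target)) / (2 * eps))
        candidate = [p - lr * g for p, g in zip(pb, grad)]
        candidate_error = sequence_error(params, candidate, x0, target)
        if candidate_error < error:
            pb, error = candidate, candidate_error
        else:
            lr *= 0.5  # Backtrack: the step was too large.
    return pb, error
```

Because the PB vector stays constant while the hidden state changes at every step, it behaves like a slow-changing parameter that selects which dynamics the network produces, which matches the description of PB units as bifurcation parameters.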
Part II: With Kinect input and coordinate mapping, in the second part we successfully used the RNNPB to identify personalised emotions from non-verbal behaviours. In this way, our lab colleagues brought together affect recognition, expression, and the internal parameters of the emotion model in the embodied “cognitive architecture” of the NAO robot to generate child-like behaviour with various emotional expressions. In this setting, affective behavioral expression and recognition are directly linked to the affective space that models the (continuous) internal affective states of the robot and their dynamics.

From the perspective of "synthetic neural modelling", the robot offers a natural way to model the embodied construals of nouns and verbs during the acquisition of complete sentences. Developing a system that demonstrates some level of cognitive ability can therefore lead to a better understanding of the neural machinery that underlies cognitive function. During the second year of life, infants start to associate names with the visual information that appears in their receptive field. With dynamic scenes in particular (e.g., a man lifting a ball), and guided by visual attention, infants can construe the scenes flexibly, noticing the consistent action (e.g., lifting) and the consistent object (e.g., the ball). Gradually, their construals of the scenes are influenced by the words in the auditory input (e.g., from their parents), so that they learn to use the grammatical form of a novel word describing a scene (verb or noun), and successfully map novel verbs to event categories (e.g., lifting actions) and novel nouns to object categories (e.g., balls). Moreover, infants' representations are sufficiently abstract to let them extend novel verbs and nouns appropriately beyond the precise scenes on which they were taught.
In the context of the POETICON++ project, we conducted a robotic experiment that takes direct inspiration from these child psychology studies of verb and noun learning. We explore how this embodied interaction supports the learning of novel nouns and verbs. The iCub robot learns a novel object and a particular motor action with the guidance of an instructor. The robot was allowed to learn only part of the combinations of verbs and nouns (as shown in the table). Nevertheless, the experimental results showed that the MTRNN-based neural learning allowed the robot to acquire a generalisation ability: the robot is able to react to novel combinations that it has not learnt before. Further analysis also implied that the nouns and the verbs emerge as two independent activations in the internal neurodynamics, which spread over both the spatial and temporal domains. The resulting model qualitatively captures the infant data and makes interesting predictions that are currently being explored in new child experiments. On the technical side, I also participated in the debugging and testing of the Aquila software (Cognitive Robotics Architecture) in this project. |

